In-App Intelligence.

HDK agents are part of your application logic — same process, same memory, same data structures as the rest of your code. They don’t need context shipped to them across a network seam — no vector DBs, embedding pipelines, permission proxies, context assemblers. Agents are already where the context lives.

Full-stack agentic AI framework for llama.cpp

Experience it on your machine.

A harness we built with HDK: a private deep research assistant that runs in your terminal. Use /scan PATH to ground against files on your disk, or /web to research online (you’ll need a Tavily search API key).

AI as a feature, not an API call.

The framework’s whole surface area, in five tabs. No elaborate infrastructure, no complex plumbing.

import { useAgent } from '@lloyal-labs/lloyal-agents';

import { reportTool } from '@lloyal-labs/rig';

import { feedback } from './sources';

// The atomic unit. Owns a KV branch, calls tools to extend that

// branch with evidence, returns a result via the terminal tool.

// Its branch is pruned automatically on return — KV stays continuous.

const agent = yield* useAgent({

systemPrompt: "Customer-feedback analyst. Extract themes with evidence.",

task: "Summarize this week's feedback into 3 themes with citations.",

tools: [...feedback.tools, reportTool],

terminalToolName: 'report',

});

console.log(agent.result);

import { agentPool, parallel } from '@lloyal-labs/lloyal-agents';

import { reportTool } from '@lloyal-labs/rig';

import { subjects, sources, workerPrompt, today } from './harness';

// N agents fan out at once. Each forks from the parent KV in O(1)

// (metadata-only, zero tensor copy). They share the parent prefix;

// only their suffixes live in unique cells. Completed agents prune

// the moment they return — KV stays continuous through the run.

//

// systemPrompt is a render call, not a static string — each agent's

// prompt is parameterized with its peers' subjects so it stays focused.

// See the prompts tab for what workerPrompt() actually renders.

const pool = yield* agentPool({

orchestrate: parallel(

subjects.map(s => ({

content: `Investigate: ${s}`,

systemPrompt: workerPrompt({

subject: s,

siblings: subjects.filter(o => o !== s),

maxTurns: 12,

date: today(),

}),

}))

),

tools: [...sources.tools, reportTool],

terminalToolName: 'report',

});

import { agentPool, chain } from '@lloyal-labs/lloyal-agents';

import { reportTool } from '@lloyal-labs/rig';

import { sources, workerPrompt } from './harness';

// Sequential agents. Each one's findings extend the spine; the

// next agent forks from the extended spine and sees prior findings

// in attention — not via re-prompting. KV grows; no re-encoding.

//

// workerPrompt gets the taskIndex, so step 0 establishes vocabulary

// and steps 1+ get a "build on prior research" preamble. See prompts.

const steps = ['discover', 'verify', 'distill'];

const pool = yield* agentPool({

orchestrate: chain(steps, (step, i) => ({

task: {

content: `Step: ${step}`,

systemPrompt: workerPrompt({ subject: step, taskIndex: i, maxTurns: 8 }),

},

userContent: `${step} findings:`,

})),

tools: [...sources.tools, reportTool],

terminalToolName: 'report',

});

import { agentPool, dag } from '@lloyal-labs/lloyal-agents';

import { reportTool } from '@lloyal-labs/rig';

import { sources, investigatePrompt, comparePrompt, synthPrompt } from './harness';

// Declared dependency topology. Independent nodes run in parallel;

// dependent nodes wait, then fork from a spine that carries their

// dependencies' findings in attention. Each role gets its own prompt

// renderer — investigator, analyst, editor are all functions of the

// node's per-spawn data. See the prompts tab.

const pool = yield* agentPool({

orchestrate: dag([

{ id: "research_x", task: { content: "Research subject X", systemPrompt: investigatePrompt({ subject: 'X' }) } },

{ id: "research_y", task: { content: "Research subject Y", systemPrompt: investigatePrompt({ subject: 'Y' }) } },

{ id: "compare", task: { content: "Compare X vs Y", systemPrompt: comparePrompt({ x: 'X', y: 'Y', axis: 'cost' }) },

dependsOn: ["research_x", "research_y"] },

{ id: "synthesize", task: { content: "Write the report", systemPrompt: synthPrompt({ subjects: ['X', 'Y'] }) },

dependsOn: ["compare"] },

]),

tools: [...sources.tools, reportTool],

terminalToolName: 'report',

});

import { withSpine, agentPool, useAgent, parallel } from '@lloyal-labs/lloyal-agents';

import { reportTool } from '@lloyal-labs/rig';

import { session, query, researchTasks, sources, installedApps, playbooks, synthPrompt } from './harness';

// The spine is a KV trunk that persists across multiple pools and agents.

// Findings accumulate on the spine; every fork from the spine inherits the

// full trajectory via metadata-only KV sharing. This is Continuous Context

// — downstream agents see what upstream agents found in attention,

// not via re-prompting or a passed-around findings array.

yield* withSpine(

{

parent: session.trunk ?? undefined,

systemPrompt: playbooks({ // catalog reshapes per installed apps

hasWeb: installedApps.has('web'),

hasCorpus: installedApps.has('corpus'),

}),

tools: [...sources.tools, reportTool],

},

function* (spine) {

// Research phase: N parallel agents extend the spine with findings.

yield* agentPool({

parent: spine,

orchestrate: parallel(researchTasks),

tools: [...sources.tools, reportTool],

terminalToolName: 'report',

pruneOnReturn: true, // free KV the moment each agent returns

});

// Synth phase: forks from the same spine. Sees every research

// finding in attention — no re-prompting, no findings array.

const synth = yield* useAgent({

parent: spine,

systemPrompt: synthPrompt({ query, agentCount: researchTasks.length }),

task: "Synthesize the findings above into a final report.",

tools: [reportTool],

terminalToolName: 'report',

});

return synth.result;

},

);

import { call } from 'effection';

import { useAgent } from '@lloyal-labs/lloyal-agents';

import { WebSource, CorpusSource, createReranker, reportTool } from '@lloyal-labs/rig';

import { workerPrompt, today, rerankerPath, tavilyKey, resources, chunks } from './harness';

// The Reranker is the cross-encoder focal lens. One instance feeds

// every source's retrieval pipeline. At each tool call it scores

// candidate chunks against the agent's current task and admits only

// verbatim top-K within a token budget. Never paraphrased, never

// summarized — context grows by exactly what the model needs.

const reranker = yield* call(() => createReranker({ modelPath: rerankerPath }));

// Sources expose retrieval tools. One contract for any data —

// files, SQL, web, your records. Each source binds the shared

// reranker via bind(), then its tools become available to agents.

const web = new WebSource({ apiKey: tavilyKey });

const corpus = new CorpusSource(resources, chunks);

for (const s of [web, corpus]) yield* s.bind({ reranker });

// Retrieval happens mid-generation, not upfront. The agent decides

// what to look up based on what it's discovered so far; results

// land in its KV as conversation turns and inform the next decision.

const agent = yield* useAgent({

systemPrompt: workerPrompt({ subject: 'X', siblings: [], maxTurns: 12, date: today() }),

task: "Compare X and Y across recent benchmarks.",

tools: [...web.tools, ...corpus.tools, reportTool],

terminalToolName: 'report',

});

import { Source, type Reranker } from '@lloyal-labs/rig';

import { type Operation } from 'effection';

import { SearchCustomersTool, ReadCustomerTool } from './customer-tools';

import { db } from './app';

// Bridge your app's own data. Implementing Source slots your retrieval

// into the same pipeline as built-in Web and Corpus — same reranker,

// same focal-lens admission, same KV-resident results. No vector DB,

// no embedding ETL, no parallel knowledge layer. Your customer table

// becomes an agent-accessible source just by writing this class.

class CustomerSource extends Source<{ reranker: Reranker }> {

readonly name = 'customers';

readonly searchTool = new SearchCustomersTool(db);

readonly readTool = new ReadCustomerTool(db);

get tools() { return [this.searchTool, this.readTool]; }

*bind(ctx: { reranker: Reranker }): Operation<void> {

this.searchTool.setReranker(ctx.reranker);

}

getChunks() { return db.customers.iterate(); }

}

// Drop in alongside any other source — same wiring, same scorer.

const customers = new CustomerSource();

yield* customers.bind({ reranker });

import { renderTemplate } from '@lloyal-labs/lloyal-agents';

// The worker prompt is a function of pool topology. Each parallel

// agent is told who its peers are and what they're covering — so

// it stays focused on its own subject instead of drifting into

// "I'll also cover the others" territory. The model sees its

// peer list at the start of every turn; it can't forget the boundary.

const WORKER = `

You are investigating: <%= it.subject %>

PROCESS: broad search → fetch top results → report with evidence.

<% if (it.siblings.length > 0) { -%>

You are one of <%= it.siblings.length + 1 %> parallel agents. The others:

<%= it.siblings.map(function(s) { return '- ' + s }).join('\\n') %>

Stay focused on your own subject. The others handle the rest.

<% } -%>

You have <%= it.maxTurns %> tool calls. Today is <%= it.date %>.

`;

export const workerPrompt = (data) => renderTemplate(WORKER, data);

// Used in agentPool — each spawn passes its subject + the rest:

// systemPrompt: workerPrompt({ subject: 'X', siblings: ['Y','Z'], ... })

import { renderTemplate } from '@lloyal-labs/lloyal-agents';

// The spine playbook block reshapes based on which apps the user

// has wired in. /web with no /scan = web-only catalog. /scan only

// = corpus-only. Both wired = both catalogs plus a cross-source

// boundary rule that's only relevant when the model has access

// to both palettes. The spine literally changes shape at runtime.

const PLAYBOOKS = `

# Playbooks

<% if (it.hasWeb) { -%>

## web_research

Tools: web_search, fetch_page

Use when: gathering evidence from the open web.

<% } -%>

<% if (it.hasCorpus) { -%>

## corpus_research

Tools: grep, read_file, search

Use when: investigating a local document corpus.

<% } -%>

<% if (it.hasWeb && it.hasCorpus) { -%>

# Cross-source rule

Apply only the playbook the agent system message names.

Do not mix tools across playbooks.

<% } -%>

`;

export const playbooks = (data) => renderTemplate(PLAYBOOKS, data);

// Used in withSpine — what's in the spine depends on what's installed:

// systemPrompt: playbooks({ hasWeb: apps.has('web'), hasCorpus: apps.has('corpus') })

import { renderTemplate } from '@lloyal-labs/lloyal-agents';

// In a chain, the first task establishes vocabulary; later tasks

// build on what prior tasks surfaced. Task 0 gets a clean prompt.

// Tasks 1+ get an additional BUILD ON PRIOR RESEARCH preamble that

// points the model at conversation history it inherits via the spine

// — so it deepens or fills gaps instead of re-investigating.

const WORKER = `

You are investigating: <%= it.subject %>

<% if (it.taskIndex > 0) { -%>

BUILD ON PRIOR RESEARCH:

Prior tasks' findings are in your conversation history above —

inherited via the spine, not re-prompted. Read them first.

Identify named entities and open questions your task should

follow up on. Do not re-investigate covered ground; deepen

or fill gaps.

<% } -%>

You have <%= it.maxTurns %> tool calls.

`;

export const workerPrompt = (data) => renderTemplate(WORKER, data);

// Used in agentPool chain — the orchestrator passes the step index:

// chain(steps, (step, i) => ({

// task: { systemPrompt: workerPrompt({ subject: step, taskIndex: i, ... }) },

// }))

Structured Concurrency

Structured concurrency underpins concurrency in modern languages like Kotlin, Swift, Java (Project Loom), and C++26, and it is an exact fit for GPU-native agents orchestrating inference state. HDK’s TypeScript runtime uses Effection to orchestrate agent state over KV branches, so every agent in a pool binds to a parent scope: cancellation propagates from parent to child, and teardown runs in reverse order.
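To make the scoping rule concrete, here is a minimal sketch of a scope tree in plain TypeScript. It does not use Effection or any HDK API; the `Scope` class and its `spawn`, `onClose`, and `close` methods are hypothetical names chosen for illustration. It only shows the two properties named above: closing a parent closes every child, and cleanup runs in reverse order.

```typescript
// Toy scope tree. Illustrative only: not Effection's or HDK's API.
type Teardown = () => void;

class Scope {
  readonly name: string;
  private children: Scope[] = [];
  private teardowns: Teardown[] = [];
  private closed = false;

  constructor(name: string) {
    this.name = name;
  }

  // Create a child scope bound to this one.
  spawn(name: string): Scope {
    if (this.closed) throw new Error(`scope ${this.name} is closed`);
    const child = new Scope(name);
    this.children.push(child);
    return child;
  }

  // Register cleanup to run when this scope closes.
  onClose(fn: Teardown): void {
    this.teardowns.push(fn);
  }

  get isClosed(): boolean {
    return this.closed;
  }

  // Closing a scope first closes its children (most recent first),
  // then runs its own teardowns in reverse registration order.
  close(): void {
    if (this.closed) return;
    this.closed = true;
    for (const child of [...this.children].reverse()) child.close();
    for (const fn of [...this.teardowns].reverse()) fn();
  }
}

const log: string[] = [];
const pool = new Scope("pool");
pool.onClose(() => log.push("free pool"));

const a = pool.spawn("agent-a");
a.onClose(() => log.push("free branch a"));

const b = pool.spawn("agent-b");
b.onClose(() => log.push("free branch b"));

// Cancelling the pool reaches both agents before the pool itself cleans up.
pool.close();
console.log(log); // ["free branch b", "free branch a", "free pool"]
```

In a real runtime the teardown callbacks would release resources such as KV branches; here they only record the order in which they ran.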

Continuous-Context Agents

HDK agents share GPU state, not strings. A fork is metadata-only — O(1), zero tensor copy. Child branches reuse the parent’s attention state rather than re-encoding lossy summaries. The result is 4.4× fewer tokens processed than a prompt-rebuilding approach, freeing compute for concurrent agent execution and longer retrieval loops. Active pruning keeps the context continuous.
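The sharing described above can be pictured with a toy model. This is a sketch under stated assumptions, not HDK's or llama.cpp's implementation: the `Branch` class is hypothetical, and plain numbers stand in for KV cells. A fork stores only a pointer to its parent and the length of the prefix it inherits, so the shared prefix is held once no matter how many children read it.

```typescript
// Toy model of prefix sharing. Numbers stand in for KV cells;
// the Branch class is illustrative, not HDK's API.
class Branch {
  private suffix: number[] = [];
  private readonly parent: Branch | null;
  private readonly inherited: number; // parent cells visible to this branch

  constructor(parent: Branch | null = null) {
    this.parent = parent;
    this.inherited = parent ? parent.length : 0;
  }

  // Total cells this branch attends over.
  get length(): number {
    return this.inherited + this.suffix.length;
  }

  // Cells stored uniquely for this branch.
  get ownCells(): number {
    return this.suffix.length;
  }

  // O(1): records a parent pointer and a prefix length, copies nothing.
  fork(): Branch {
    return new Branch(this);
  }

  // New cells land only in this branch's own suffix.
  append(...cells: number[]): void {
    this.suffix.push(...cells);
  }

  // Materialize the visible context: inherited prefix plus own suffix.
  context(): number[] {
    const prefix = this.parent
      ? this.parent.context().slice(0, this.inherited)
      : [];
    return [...prefix, ...this.suffix];
  }
}

const trunk = new Branch();
trunk.append(1, 2, 3, 4); // shared prompt and task

const a = trunk.fork();
const b = trunk.fork();
a.append(10, 11);
b.append(20);

console.log(a.context()); // [1, 2, 3, 4, 10, 11]
console.log(b.context()); // [1, 2, 3, 4, 20]

// 7 cells stored in total, versus 11 if each agent re-encoded its full context.
console.log(trunk.ownCells + a.ownCells + b.ownCells); // 7
```

The figures in this example are arithmetic on the toy data only; they are unrelated to the 4.4× measurement quoted above.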

Retrieval-Interleaved Generation

Agents don’t just retrieve: they assemble context during generation, searching, reading, and reranking across your app’s own data. The Source contract is the assembly primitive, with one shape for files, SQL, the web, or user records. At every retrieval step a cross-encoder focal lens scores candidate chunks against the agent’s current hypothesis and admits only the verbatim top-K, bounded by a token budget and never summarized.
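The admission step can be sketched as a small function. This is an illustration under assumptions, not the library's reranker: `admit`, `overlapScore`, and the `Chunk` shape are hypothetical, and a simple word-overlap count stands in for the cross-encoder model. The sketch ranks chunks by score, then admits them unmodified until it reaches K chunks, skipping any chunk that would exceed the token budget.

```typescript
// Hypothetical chunk shape; tokens is a precomputed token count.
interface Chunk {
  id: string;
  text: string;
  tokens: number;
}

// Placeholder scorer: counts chunk words that also appear in the query.
// A real cross-encoder would score each (query, chunk) pair with a model.
function overlapScore(query: string, text: string): number {
  const q = new Set(query.toLowerCase().split(/\W+/).filter(Boolean));
  const words = text.toLowerCase().split(/\W+/).filter(Boolean);
  return words.filter((w) => q.has(w)).length;
}

// Admit at most topK chunks, highest score first, within the token budget.
// Chunks are returned as-is: never truncated, paraphrased, or summarized.
function admit(query: string, chunks: Chunk[], topK: number, budget: number): Chunk[] {
  const ranked = chunks
    .map((chunk) => ({ chunk, score: overlapScore(query, chunk.text) }))
    .sort((x, y) => y.score - x.score);

  const admitted: Chunk[] = [];
  let used = 0;
  for (const { chunk } of ranked) {
    if (admitted.length === topK) break;
    if (used + chunk.tokens > budget) continue; // does not fit, try the next one
    admitted.push(chunk);
    used += chunk.tokens;
  }
  return admitted;
}

const candidates: Chunk[] = [
  { id: "a", text: "Refund requests show high latency", tokens: 40 },
  { id: "b", text: "Complaints about refund latency doubled", tokens: 50 },
  { id: "c", text: "Office party scheduled for Friday", tokens: 10 },
  { id: "d", text: "Latency complaints in checkout", tokens: 30 },
];

const admitted = admit("refund latency complaints", candidates, 2, 80);
console.log(admitted.map((c) => c.id)); // ["b", "d"]
```

Chunk "a" scores the same as "d" and ranks ahead of it, but adding it after "b" would need 90 tokens against a budget of 80, so it is skipped and "d" is admitted instead.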

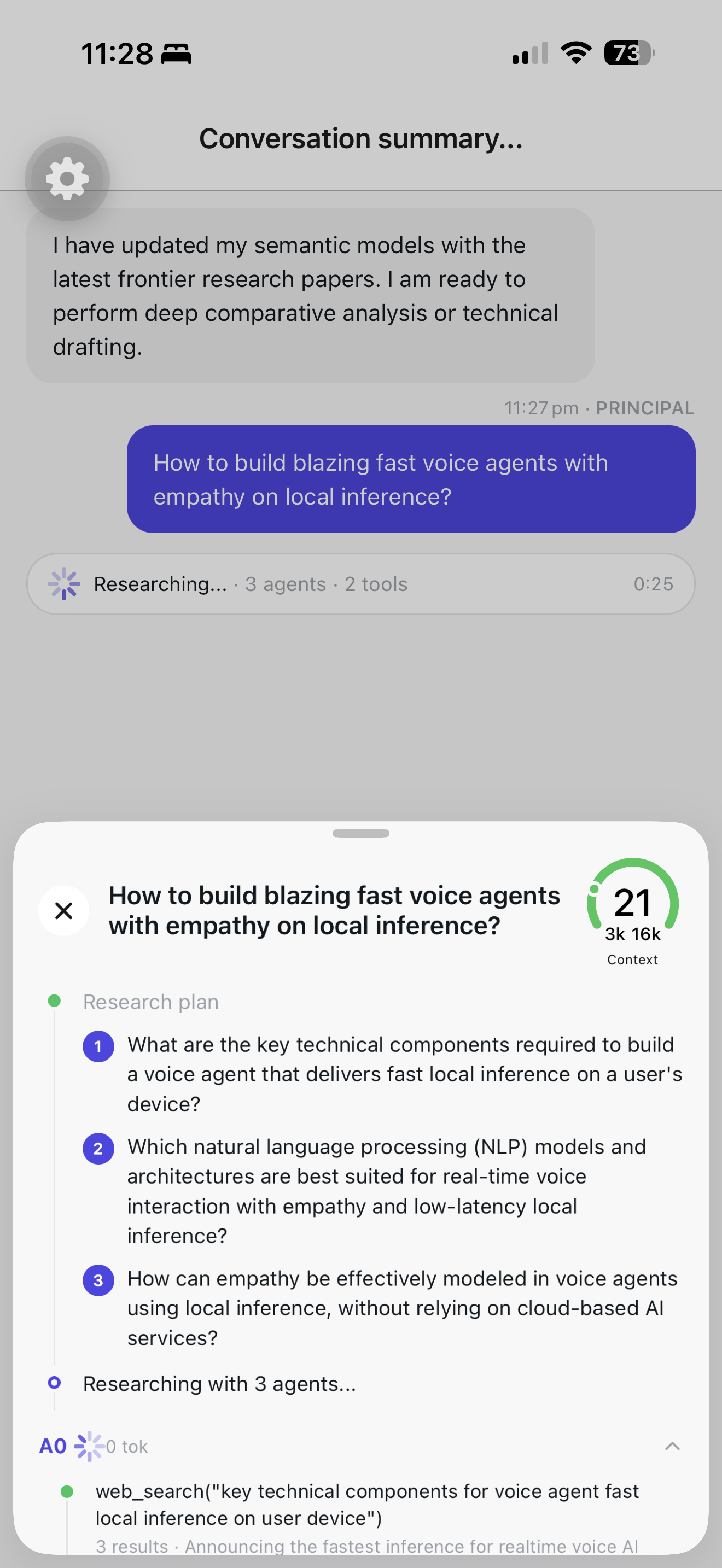

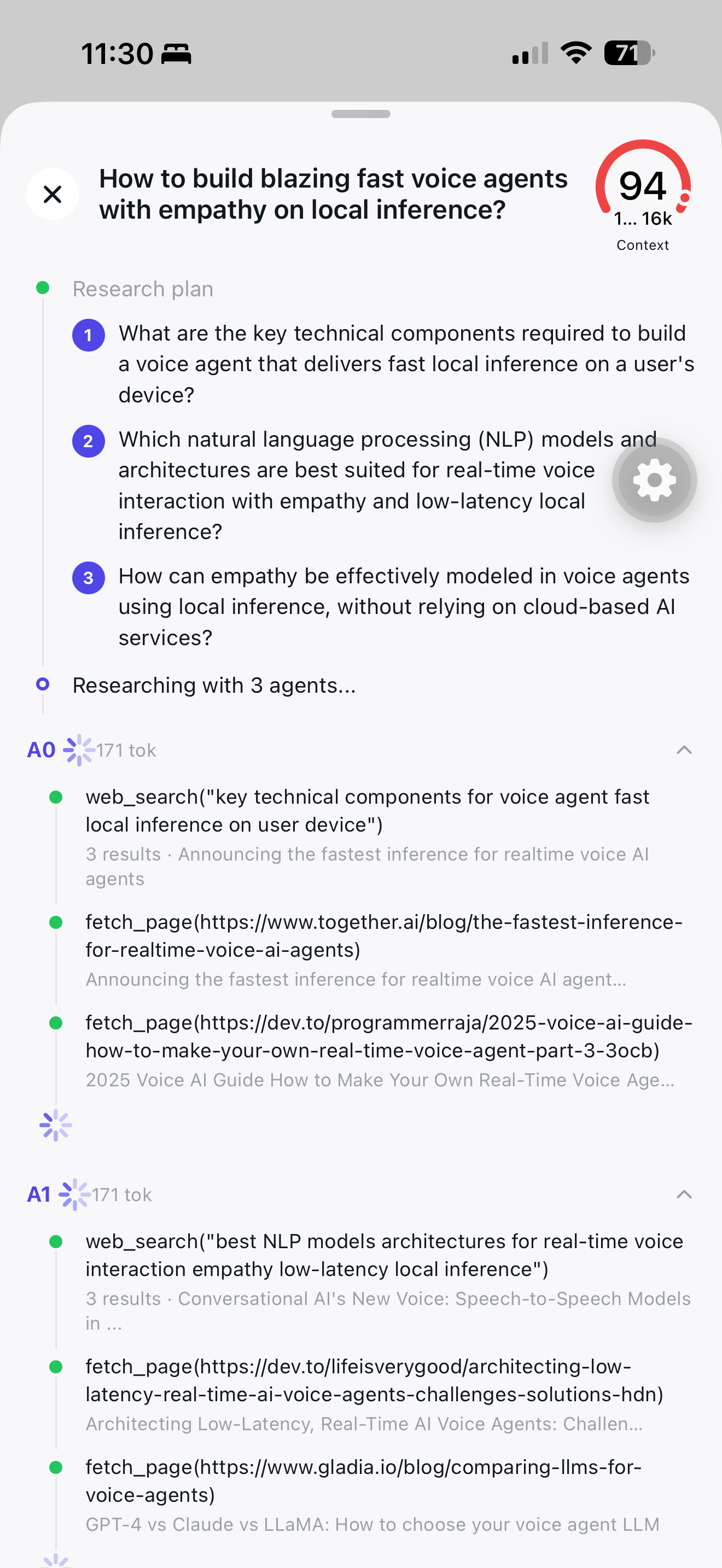

On-device. Multi-agent. Parallel.

Parallel agents running the web_search and fetch_page tools on iPhone, with no API calls, no streaming server, and no cloud round-trip. The Node SDK is open source and ships anywhere Node runs: server, CLI, or desktop via Electron or Tauri. The native iOS & Android runtime is currently in commercial preview.

Ships in your binary.

One install. Every store.

Cloud-API apps ship a UI through the store and keep the AI on someone else’s servers. With HDK, the whole product ships in one binary — agents, retrieval, inference — through every consumer channel: Mac App Store, Microsoft Store, iOS App Store, Google Play. Users click install. That’s it.

Ships with your release.

The AI ships with your app’s release — same version, same binary, same QA. The agent you tested is the agent running on every user’s device: laptop, iPhone, behind a firewall, on a plane. The model doesn’t silently update; deterministic replay reproduces production runs in dev with identical reasoning, not just identical outputs.

Sell it like software.

Price it however you want: one-time, subscription, or free. Your unit economics are uncapped — no provider takes a margin between you and your users. Your brand carries the experience, not someone else’s logo.

#include <lloyal/hdk.hpp>

// Runs on the equipment's own compute.

// Compiled native, no network in the loop.

auto pool = hdk::agent_pool({

.orchestrate = hdk::parallel(perceive, plan, monitor),

.tools = { control.dispatch_command },

.terminal_tool_name = "dispatch",

});

// Each cycle: observe, orient, decide, act.

for (;;) {

auto frame = hdk::fuse(camera, lidar, imu);

co_await pool.run(frame);

}

On-board intelligence.

Literally.

Enable the equipment you manufacture to run multi-agent Observe-Orient-Decide-Act (OODA) loops natively. No AI box, no networking overhead. Deploy models with 3D spatial reasoning that take sensor-fusion input and dispatch actuation as tool calls, either autonomously or with a human in the loop. Functional safety stays where it belongs: in your certified hardware layer. In development with launch partners, co-engineering toward 2026–2027 production cycles.